All-Around AI

“In just 4 months, from 02 August 2025, providers of general-purpose AI models will need to comply with significant obligations, including obligations on the transparency of their models and on detailing the training material used, which could expose them to copyright infringement allegations. However, since penalties cannot be imposed until 02 August 2026, providers may feel a sense of reprieve.”

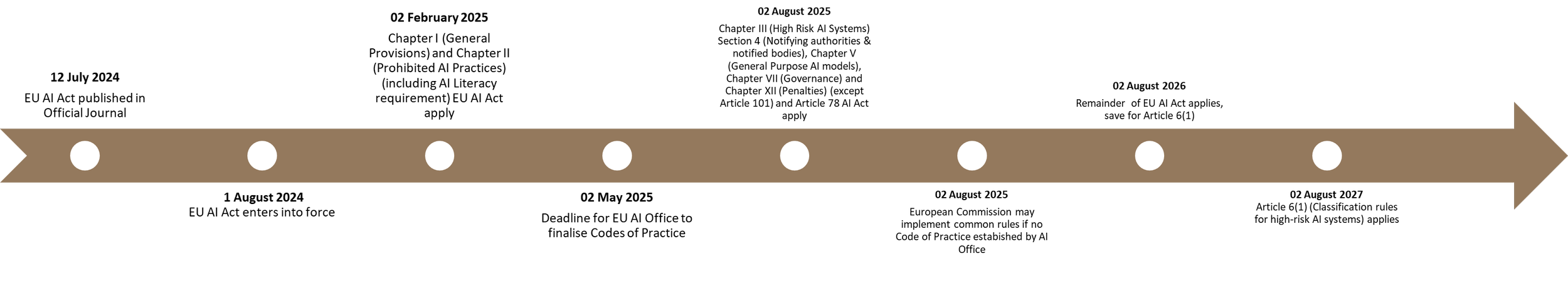

The implementation of the EU AI Act has been staggered. It targets first those AI systems deemed to pose an unacceptable level of risk, which are prohibited, then general-purpose AI models, and lastly high-risk AI systems.

EU AI Act Implementation Timeframe

Chapters I (General provisions) and II (Article 5: Prohibited AI practices) of the EU AI Act took effect on 02 February 2025. This brought into force the ban on certain AI systems and use cases offered for use or used in the EU, or affecting persons in the EU, as well as the positive obligation on providers and deployers of AI systems to use best endeavours to ensure that the people they entrust with operating and using AI systems have sufficient AI literacy (Article 4 AI Act).

The AI Office is required to have Codes of Practice prepared by 02 May 2025, failing which the European Commission can implement common rules. On 11 March 2025, a third draft of the General-Purpose AI Code of Practice was published, detailing the commitments signatories would make in order to demonstrate that they fulfil their obligations under the AI Act.

Several provisions of the EU AI Act will apply in just four months' time, from 02 August 2025, including those targeting general-purpose AI models.

Chapter III Section 4

Chapter III (High-Risk AI Systems), Section 4 (Notifying authorities and notified bodies) (Articles 28-39 AI Act) requires Member States to designate notifying authorities responsible for assessing, designating, notifying and monitoring conformity assessment bodies, and sets out the rules governing those notified conformity assessment bodies.

Chapter V

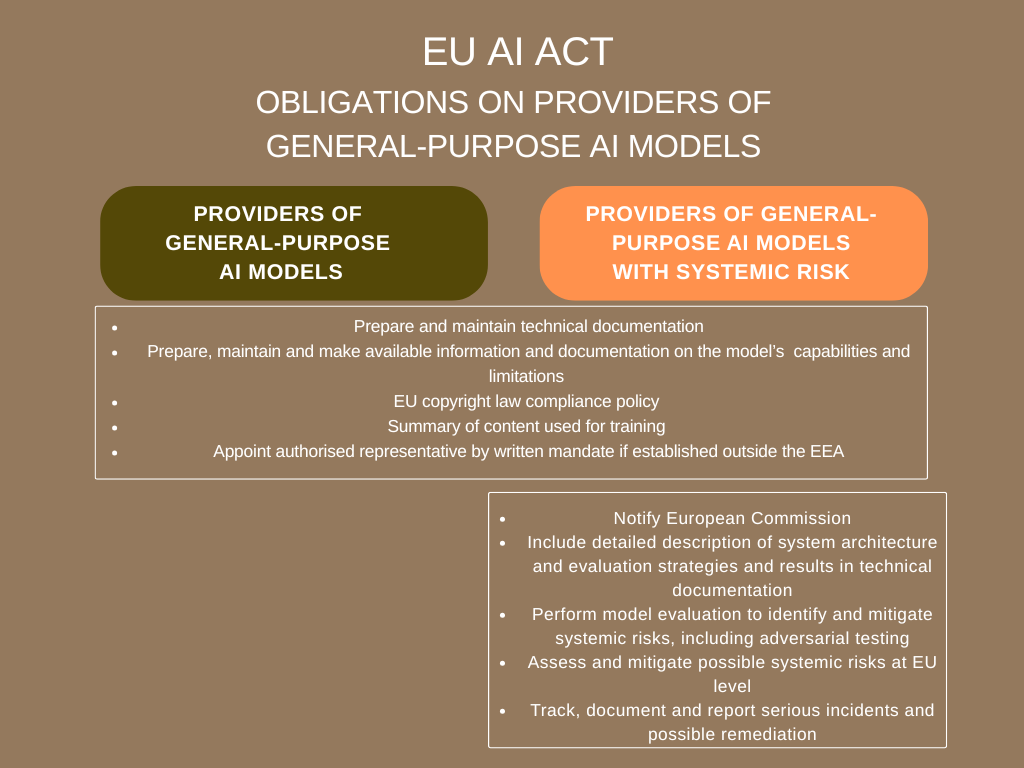

Chapter V (General-purpose AI models) of the AI Act establishes two categories of general-purpose AI model: general-purpose AI models, and general-purpose AI models with systemic risk. The latter are subject to more stringent obligations.

A general-purpose AI model is defined at Article 3(63) AI Act as being an “AI model, including where such an AI model is trained with a large amount of data using self-supervision at scale, that displays significant generality and is capable of competently performing a wide range of distinct tasks regardless of the way the model is placed on the market and that can be integrated into a variety of downstream systems or applications, except AI models that are used for research, development or prototyping activities before they are placed on the market”.

General-purpose AI models with systemic risk are those that either have high-impact capabilities, i.e. capabilities that match or exceed those recorded in the most advanced general-purpose AI models (Article 3(64) AI Act), which are presumed where the cumulative amount of computation used for training exceeds 10^25 floating-point operations (Article 51(2) AI Act), or are determined by the European Commission to have equivalent capabilities or impact (Article 51(1) AI Act). Systemic risk is defined as a risk specific to the high-impact capabilities of general-purpose AI models that has a significant impact on the Union market due to their reach, or due to actual or reasonably foreseeable negative effects on public health, safety, public security, fundamental rights, or society as a whole, and that can be propagated at scale across the value chain (Article 3(65) AI Act). The definition of a general-purpose AI model is likely to include publicly available generative AI models: Recital 99 AI Act describes large generative AI models as a typical example.
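The classification logic above can be sketched as a simple predicate. This is a minimal illustration, assuming the provider already knows its cumulative training compute and the outcome of any capability evaluation or Commission decision; the `GPAIModel` class and its field names are hypothetical. It encodes only the compute-based presumption of Article 51(2), which providers may seek to rebut, and is not a legal assessment.

```python
from dataclasses import dataclass

# Article 51(2) AI Act: high-impact capabilities are presumed when the
# cumulative compute used for training exceeds 10^25 floating-point operations.
TRAINING_COMPUTE_THRESHOLD_FLOPS = 1e25


@dataclass
class GPAIModel:
    name: str                               # hypothetical identifier
    training_compute_flops: float           # cumulative training compute
    high_impact_capabilities: bool = False  # assumed input: capability evaluation result
    designated_by_commission: bool = False  # assumed input: Commission decision


def is_presumed_high_impact(model: GPAIModel) -> bool:
    """Compute-based presumption only; 'exceeds' means strictly greater."""
    return model.training_compute_flops > TRAINING_COMPUTE_THRESHOLD_FLOPS


def has_systemic_risk(model: GPAIModel) -> bool:
    """Simplified reading of Article 51(1): high-impact capabilities
    (evaluated or presumed from compute) or a Commission designation."""
    return (
        model.high_impact_capabilities
        or is_presumed_high_impact(model)
        or model.designated_by_commission
    )


if __name__ == "__main__":
    print(has_systemic_risk(GPAIModel("example-small", 3e23)))  # False
    print(has_systemic_risk(GPAIModel("example-large", 5e25)))  # True
```

The Commission designation route is kept as a separate flag because it can apply regardless of training compute.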

The obligations on providers of general-purpose AI models are as follows, with cumulative obligations on providers of general-purpose AI models with systemic risk:

General-purpose AI models

Prepare, keep up to date and provide on request to the AI Office and national competent authorities technical documentation comprising, as a minimum: a general description of the general-purpose AI model, including its intended tasks, applicable acceptable use policies, release date and methods of distribution, architecture and number of parameters, modality and format of inputs and outputs, and the licence; and a detailed description of these elements and of the development process, including the technical means of integrating the model, design specifications, training process, training data, computational resources and energy consumption (Article 53(1)(a) & Annex XI AI Act), unless the model is released under a free and open-source licence that allows for the access, usage, modification and distribution of the model, and whose parameters, including the weights, the information on the model architecture and the information on model usage, are made publicly available;

Prepare, keep up to date and make available to providers of AI systems who intend to integrate the model into their systems information and documentation on the capabilities and limitations of the model, including as a minimum: a general description of the general-purpose AI model, covering its intended tasks, applicable acceptable use policies, release date and methods of distribution, interaction with external hardware or software, relevant software versions, architecture and number of parameters, modality and format of inputs and outputs, and the licence; and a description of the elements of the model and its development, including the technical means for integration, the modality and format of inputs and outputs and their maximum size, and information on the data used for training, testing and validation, including the type and provenance of the data and curation methodologies (Article 53(1)(b) & Annex XII AI Act), unless the model is released under a free and open-source licence that allows for the access, usage, modification and distribution of the model, and whose parameters, including the weights, the information on the model architecture and the information on model usage, are made publicly available;

Establish a policy to comply with EU copyright law (Article 53(1)(c) AI Act);

Prepare and publish a sufficiently detailed summary of the content used for training, in accordance with the template provided by the AI Office (Article 53(1)(d) AI Act);

Where the provider is established in a third country, appoint by written mandate an authorised representative established in the EU. The mandate must empower the representative to verify that the provider has drawn up the necessary technical documentation and fulfilled its obligations under Article 53 AI Act; keep a copy of the technical documentation and the provider's contact details for 10 years after the model is placed on the market; provide the AI Office, on request, with the information and documentation necessary to demonstrate compliance; co-operate with the AI Office and competent authorities; and be addressed by the AI Office or competent authorities in addition to, or instead of, the provider (Article 54 AI Act). This obligation does not apply where the model is released under a free and open-source licence that allows for the access, usage, modification and distribution of the model, and whose parameters, including the weights, the information on the model architecture and the information on model usage, are made publicly available.

General-purpose AI models with systemic risk

Comply with the requirements for general-purpose AI models;

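Notify the European Commission without delay, and in any event within two weeks, once the model meets the systemic-risk threshold or it becomes known that it will do so (Article 52(1) AI Act); the Commission must then publish and keep up to date a list of general-purpose AI models with systemic risk (Article 52(6) AI Act);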

Include within the technical documentation a detailed description of evaluation strategies and results, any adversarial testing measures, any model adaptations including fine-tuning, and the system architecture (Annex XI, Section 2 AI Act);

Perform model evaluation including adversarial testing to identify and mitigate systemic risks (Article 55(1)(a) AI Act);

Assess and mitigate possible systemic risks at EU level (Article 55(1)(b) AI Act);

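Track, document and report information on serious incidents and possible corrective measures to the AI Office and competent authorities (Article 55(1)(c) AI Act); and

Ensure an adequate level of cybersecurity protection for the model and its physical infrastructure (Article 55(1)(d) AI Act).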

Providers may rely on Codes of Practice to demonstrate compliance with these obligations until a harmonised standard is published (Articles 53(4) and 55(2) AI Act).
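The way these obligations stack, and where the open-source exemption applies, can be sketched as a lookup. This is a minimal illustration, assuming three yes/no inputs; the function name `applicable_obligations` and its labels are hypothetical. It reflects the rule in Articles 53(2) and 54(6) AI Act that the free and open-source exemption does not extend to models with systemic risk, and it is no substitute for legal analysis.

```python
def applicable_obligations(systemic_risk: bool, open_source: bool,
                           third_country: bool) -> list[str]:
    """Hypothetical helper: labels for the Chapter V obligations that apply."""
    # The free and open-source exemption (Articles 53(2) and 54(6)) does not
    # extend to general-purpose AI models with systemic risk.
    exempt = open_source and not systemic_risk

    obligations: list[str] = []
    if not exempt:
        obligations.append("Art 53(1)(a): technical documentation for the AI Office and authorities")
        obligations.append("Art 53(1)(b): information for downstream AI system providers")
    # The copyright policy and training-content summary apply to every provider.
    obligations.append("Art 53(1)(c): policy to comply with EU copyright law")
    obligations.append("Art 53(1)(d): public summary of training content")
    if third_country and not exempt:
        obligations.append("Art 54: authorised representative in the EU")
    if systemic_risk:
        # Cumulative obligations for models with systemic risk.
        obligations.extend([
            "Art 52(1): notify the European Commission",
            "Art 55(1)(a): model evaluation, including adversarial testing",
            "Art 55(1)(b): assess and mitigate systemic risks at EU level",
            "Art 55(1)(c): track, document and report serious incidents",
            "Art 55(1)(d): adequate cybersecurity protection",
        ])
    return obligations


if __name__ == "__main__":
    # Example: an open-source model from a non-EU provider, below the threshold.
    for item in applicable_obligations(systemic_risk=False, open_source=True, third_country=True):
        print(item)
```

For the open-source, non-systemic example only the copyright policy and the training-content summary remain, matching the exemptions described in the list above.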

Chapter VII (Governance)

Chapter VII (Articles 64-70 AI Act) addresses the functions and operation of the AI Office, the European Artificial Intelligence Board, an Advisory Forum and a Scientific Panel of Independent Experts, as well as the designation by Member States of national competent authorities, comprising at least one notifying authority and at least one market surveillance authority.

Chapter XII (Penalties) (except Article 101)

Chapter XII (Penalties) (except Article 101), i.e. Articles 99 and 100 AI Act, establishes the framework for penalties for non-compliance with the AI Act.

Non-compliance with the prohibition of AI practices (Article 5 AI Act) is punishable by fines of up to €35m or 7% of worldwide annual turnover for the preceding financial year, whichever is higher.

Failure to comply with the obligations on providers (Article 16 AI Act), authorised representatives (Article 22 AI Act), importers (Article 23 AI Act), distributors (Article 24 AI Act), deployers (Article 26 AI Act) and notified bodies (Article 31, Article 33(1), (3) and (4) and Article 34 AI Act), or with the transparency obligations on providers and deployers (Article 50 AI Act), is punishable by fines of up to €15m or 3% of worldwide annual turnover for the preceding financial year, whichever is higher.

For SMEs, including start-ups, however, each fine is capped at whichever of the fixed amount or the percentage of worldwide annual turnover is lower (Article 99(6) AI Act).
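The fine ceilings above reduce to simple arithmetic. This minimal sketch covers only the two tiers described in this section (Articles 99(3) and 99(4) AI Act) and applies the Article 99(6) rule that SMEs face the lower of the two caps; the names `FineTier` and `max_fine_eur` are hypothetical. It computes the statutory maximum only, not the fine an authority would actually impose.

```python
from enum import Enum


class FineTier(Enum):
    # (fixed cap in EUR, percentage of worldwide annual turnover)
    PROHIBITED_PRACTICES = (35_000_000, 7)  # Article 99(3)
    OPERATOR_OBLIGATIONS = (15_000_000, 3)  # Article 99(4)


def max_fine_eur(tier: FineTier, worldwide_turnover_eur: int, is_sme: bool = False) -> float:
    """Upper limit of the fine for one tier (ceiling only, simplified)."""
    fixed_cap, percent = tier.value
    turnover_cap = worldwide_turnover_eur * percent / 100
    if is_sme:
        # Article 99(6): for SMEs, including start-ups, the lower amount applies.
        return float(min(fixed_cap, turnover_cap))
    # Otherwise the higher of the fixed amount and the turnover percentage applies.
    return float(max(fixed_cap, turnover_cap))


print(max_fine_eur(FineTier.PROHIBITED_PRACTICES, 1_000_000_000))               # 70000000.0
print(max_fine_eur(FineTier.PROHIBITED_PRACTICES, 1_000_000_000, is_sme=True))  # 35000000.0
print(max_fine_eur(FineTier.OPERATOR_OBLIGATIONS, 10_000_000, is_sme=True))     # 300000.0
```

The percentages are stored as whole numbers and divided by 100 so that the example figures come out exactly rather than with floating-point residue.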

The European Data Protection Supervisor can impose fines on EU institutions, bodies, offices and agencies for non-compliance (Article 100 AI Act).

Article 101 AI Act, which governs fines for providers of general-purpose AI models, will not apply until 02 August 2026, even though the obligations on those providers apply a year earlier.

Article 78

Article 78 (Chapter IX, Section 3 AI Act) requires all bodies and persons involved in applying the AI Act to respect the confidentiality of the information and data they obtain.

The remainder of the EU AI Act takes effect from 02 August 2026, save that Article 6(1) AI Act (Classification rules for high-risk AI systems) shall apply later, from 02 August 2027.
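The staggered timeline described in this article can be summarised as a date lookup. This is a minimal sketch, assuming the four application dates set out above (Article 113 AI Act); the `MILESTONES` list and `provisions_applicable` function are hypothetical and the labels are simplified summaries, not the legal text.

```python
from datetime import date

# Application dates as described in the article (Article 113 AI Act), simplified.
MILESTONES: list[tuple[date, str]] = [
    (date(2025, 2, 2), "Chapters I and II: general provisions, AI literacy, prohibited practices"),
    (date(2025, 8, 2), "Chapter III Section 4, Chapters V, VII and XII (except Art 101), Art 78"),
    (date(2026, 8, 2), "Remainder of the Act, including Art 101 fines on GPAI providers"),
    (date(2027, 8, 2), "Art 6(1): classification rules for high-risk AI systems"),
]


def provisions_applicable(on: date) -> list[str]:
    """Return the milestone labels whose application date has been reached."""
    return [label for start, label in MILESTONES if start <= on]


for item in provisions_applicable(date(2025, 9, 1)):
    print(item)
```

On 01 September 2025, for example, the first two milestones apply, while fines on general-purpose AI model providers remain unavailable until the third.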

If your organisation requires support in understanding how it will be impacted by the EU AI Act or the requirements of responsible AI development and deployment, or in establishing an AI governance framework or conducting an algorithmic impact assessment, an artificial intelligence (AI) impact or risk assessment, a fundamental rights impact assessment, a conformity assessment, or related human rights, data protection, equality or community impact assessments, please contact us.

Find out more about our responsible and ethical artificial intelligence (AI) services.